Ritwik Sharma

Source: Freepik

Abstract: This piece critiques Indian courts’ commercial-rights-centric approach to sexually explicit deepfake takedown cases, highlighting its gendered consequences. Analysing nine judgments through pie-charts, it argues that reliance on personality and publicity rights sidelines privacy and dignity under Article 21, disadvantaging non-celebrity women. It contends that unauthorised sexual deepfakes constitute technology-facilitated gender-based violence warranting Article 21-based relief through its horizontal application. The article proposes regulatory reforms, including intermediary liability and penal enforcement under the IT Act, 2000, to curb AI-powered ‘nudifying websites’ and ensure inclusive, privacy-centered judicial protection in the cyberspace.

Introduction

In January 2026, the New York Times reported that the AI chatbot Grok generated and posted up to 1.8 million sexualized images of women in less than 10 days, which was 41% of all images posted. Such statistics show a worrying trend of the rise in cases of revenge pornography often created using websites selling ‘nudifying services’ that employ deepfake technology enabled by Artificial Intelligence (‘AI’). While India has notified the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2025 (‘IT Rules, 2025’) to curb the menace of deepfakes, nudifying websites have remained alarmingly ubiquitous, suggesting that there is a need for the judiciary to play a more stringent role in regulating such content.

However, most lawsuits seeking takedown orders against unauthorised AI-generated deepfakes in India have based their plea on the violation of personality and publicity rights. Despite sexually explicit deepfakes forming over 90% of all deepfakes uploaded online, only a small fraction of litigation pertains to the takedown of sexually explicit deepfakes, with most petitioners seeking the removal of deepfakes that serve a commercial or political motive. As of 1st February 2026, only nine reported cases in India pertain primarily to the removal of sexually explicit deepfakes, as listed in Table 1. Through this table, this piece analyses how the rationale of the courts in granting relief in such cases has varied. In doing so, the piece first analyses the judicial inadequacy in recognizing nudifying websites as a consistent problem. Then, the piece explores the rationales adopted by courts in allowing the removal of deepfakes and suggests the horizontal application of Article 21 as a possible reasoning. Finally, it highlights the possible mechanisms within the existing laws to curtail the menace of AI-powered ‘nudifying websites’ that generate fake nude images using generative AI.

| S. No. | Case Name | Sex of the Aggrieved Party (Male/Female) | Predominant Rationale for Granting Relief |
| --- | --- | --- | --- |
| 1. | Shilpa Shetty Kundra v. Getoutlive.in, 2025 SCC OnLine Bom 5486 | Female | Article 21 (Privacy) |
| 2. | Suniel V Shetty v. John Doe S Ashok Kumar, 2025 SCC OnLine Bom 3918 | Male | Personality & Publicity Rights |
| 3. | Ranganthan Madhavan v. G. Fimlz Studioz, 2025 SCC OnLine Del 10573 | Male | Personality & Publicity Rights |
| 4. | Ajay v. Artists Planet, 2025 SCC OnLine Del 8760 | Male | Personality & Publicity Rights |
| 5. | Aishwarya Rai Bachchan v. Aishwaryaworld.com, 2025 SCC OnLine Del 5943 | Female | Personality & Publicity Rights |
| 6. | Kamya Buch v. JIX5A, 2025 SCC OnLine Del 6428 | Female | Defamation |
| 7. | Abhishek Bachchan v. Bollywood Tee Shop, 2025 SCC OnLine Del 5944 | Male | Personality & Publicity Rights |
| 8. | Akkineni Nagarjuna v. WWW.BFXXX.ORG, 2025 SCC OnLine Del 6331 | Male | Personality & Publicity Rights |
| 9. | Anil Kapoor v. Simply Life India, 2023 SCC OnLine Del 6914 | Male | Article 21 (Privacy) + Personality & Publicity Rights |

Table 1: A list of all judgements in India so far that deal with a request to remove unauthorised sexually explicit deepfakes from online websites.

The Inadequacy of Judicial Recognition of the Problem

The issue of deepfakes has never been raised in the Supreme Court of India, except in a 2025 PIL titled Narendra Kumar Goswami v. Union Of India praying inter alia for a writ of mandamus requiring the Centre to draft rules under Section 87(2)(zg) of the Information Technology Act, 2000 (‘IT Act’) mandating a 24-hour takedown mechanism for deepfakes. The Supreme Court dismissed it because the issue in question had already been raised before the Delhi High Court in Chaitanya Rohilla v. Union of India (‘Rohilla’), which is still pending. While the Court in Rohilla directed the Union of India to form a committee to consider the concern of deepfakes and the websites that host such content, the liability of nudifying websites remains elusive even after the notification of the IT Rules, 2025, and the lack of clarity in judicial directions has led to an unabated exploitation of victims.

I contend that the judiciary has fallen short of calling a spade a spade. Problems such as nudifying websites are not new, but have so far managed to wholly evade judicial scrutiny despite being a major emanator of deepfakes. The closest judicial cognizance in India was in Sadhguru Jagadish Vasudev v. Igor Isakov (‘Sadhguru’), wherein the Delhi High Court recognized that certain ‘rogue websites’ employ URL-redirection and identity masking methods along with AI tools to morph images without consent, and such acts constitute a violation of personality and publicity rights.

The issue of unauthorized sexually explicit content was first raised in Indian Courts only in 2023 in Anil Kapoor v. Simply Life India (‘Anil Kapoor’), wherein the Delhi High Court concluded that enforcing a celebrity’s personality and privacy rights entails the protection of their image from being involuntarily portrayed on pornographic websites. However, as Table 1 reveals, Courts have seldom relied on the breach of privacy as a ground for the removal of unauthorized deepfakes. They have instead placed a stronger reliance on the violation of personality and publicity rights, even in cases that involve sexually explicit deepfakes and regardless of whether a commercial motive is present.

This is because the Courts in many cases in Table 1 have placed reliance on the ongoing case of the Delhi High Court, Amitabh Bachchan v. Rajat Nagi (‘Rajat Nagi’) which awarded the petitioner therein an ad-interim injunction for the defendant’s alleged unauthorised use of the plaintiff’s status as a celebrity in a manner that was likely to cause him irreparable harm and injury to his reputation. Unfortunately, Rajat Nagi’s progress on the development of personality and publicity rights in India has eclipsed the judicial progress on giving primacy to fundamental rights in cases of unauthorised sexually-explicit deepfakes.

A classic example is Jaikishan Kakubhai Saraf Alias Jackie v. the Peppy Store & Ors. whereby the Delhi High Court differentiated between content that qualifies as legitimate artistic and economic expression, and one that violates another’s personality rights. Even though the petitioners relied on Anil Kapoor, the court only recognized the violation of personality rights while granting relief, and gave no consideration to the fact that the morphed media was sexually explicit and violated the petitioner’s fundamental right to privacy and dignity under Article 21 of the Constitution.

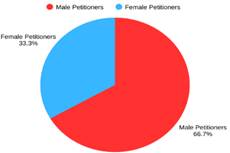

Hence, despite Anil Kapoor’s dual-approach of recognizing both the violation of a person’s privacy under Article 21 as well as their personality and publicity rights, the focus of courts on solely the latter has made it difficult for non-celebrities to enforce their rights in court upon the non-consensual publication of their AI-generated deepfakes online. Unfortunately, the judicial focus on commercial rights over the right to privacy has translated into a gender-gap in the parties filing to take down their deepfakes, as it might be harder for non-celebrity women to file a takedown suit since they cannot easily prove commercial harm as a result of the publication of their deepfakes. This explains why in six of the nine cases in Table 1, the petitioners are male celebrities even though women are otherwise significantly more likely to be targets. Figure 1 below demonstrates this discrepancy by showing that female petitioners constitute only 33.3% of all such cases, despite the alarming growth of AI-powered deepfakes disproportionately targeting women.

Figure 1: The sex ratio of the petitioners in the cases listed in Table 1.

When compared with a 2023 study which shows that 99% of all individuals targeted by pornographic deepfakes are women, it becomes quite evident that not only have men in India had better access to litigation to take down their unauthorised sexual deepfakes, but women are also less likely to succeed if they are not celebrities with established personality and publicity rights, since that is the predominant rationale used by Courts to grant relief in such cases as per Table 1.

Hence, this policy challenge becomes an example of technology-facilitated gender-based violence which needs urgent judicial intervention. While some cases such as Aishwarya Rai Bachchan v. Aishwaryaworld.com have made passing references to the violation of the right to privacy alongside personality and publicity rights by following the model in Anil Kapoor, this position has been inconsistent, and a clear judicial approach that condemns any and all unauthorised use of sexually explicit deepfakes is the need of the hour.

Why the Right to Privacy Should be the Basis of Taking Down Sexual Deepfakes

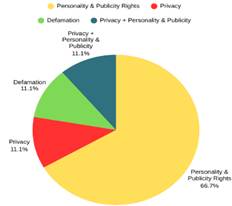

Table 1 reveals that the only case in India where relief has been granted against the publication of AI-generated sexually explicit deepfakes on the ground of them being defamatory is the Delhi High Court’s order in Kamya Buch v. JIX5A & Ors. (‘Kamya’). The court found such content to be prima facie defamatory and a patent breach of the petitioner’s fundamental rights. However, the Court fell short of specifying which fundamental right is violated through such a breach. This weakens a woman’s plea of taking down a pornographic deepfake simply on the ground of a breach of privacy under Article 21, which is a recognized fundamental right. Figure 2 shows that courts accepted a violation of Article 21 in only 22.2% of such cases despite the presence of similar facts.

Figure 2: Pie-chart showing the predominant rationale used by the respective courts in Table 1 to justify the takedown of deepfakes.

This is precisely the problem, as Courts in India tend to look at the commercial loss to a petitioner even in cases of sexually explicit deepfakes. The only two cases where the right to privacy and dignity under Article 21 has been considered by courts in granting relief are Shilpa Shetty Kundra v. Getoutlive.in (‘Shilpa Shetty’) and Anil Kapoor. I theorize that the disproportionate demographic of petitioners in such cases, being male celebrities who ground their takedown claims in the violation of their commercial rights, has skewed judicial reasoning in India. As a result, courts have prioritized proprietary rights rather than recognizing the violation of privacy as the primary basis for the removal of sexual deepfakes. This claim is supported by the fact that eight out of nine, i.e., approximately 89%, of all cases in Table 1 with similar facts have had well-known celebrities or politicians as the petitioners, the sole exception being Kamya.
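For readers who wish to verify the proportions cited from Table 1, the tally can be reproduced with a short script. This is only an illustrative sketch: the rows are transcribed from Table 1 with shortened petitioner names, the variable and function names are my own, and the treatment of every petitioner other than Kamya Buch as a celebrity follows the characterisation in the text above rather than any independent classification.

```python
from collections import Counter

# Rows transcribed from Table 1: (petitioner, sex, predominant rationale).
TABLE_1 = [
    ("Shilpa Shetty Kundra", "Female", "Article 21 (Privacy)"),
    ("Suniel V Shetty", "Male", "Personality & Publicity Rights"),
    ("Ranganthan Madhavan", "Male", "Personality & Publicity Rights"),
    ("Ajay", "Male", "Personality & Publicity Rights"),
    ("Aishwarya Rai Bachchan", "Female", "Personality & Publicity Rights"),
    ("Kamya Buch", "Female", "Defamation"),
    ("Abhishek Bachchan", "Male", "Personality & Publicity Rights"),
    ("Akkineni Nagarjuna", "Male", "Personality & Publicity Rights"),
    ("Anil Kapoor", "Male", "Article 21 (Privacy) + Personality & Publicity Rights"),
]


def share(count, total):
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


total = len(TABLE_1)

# Figure 1: split of petitioners by sex.
sex_counts = Counter(sex for _, sex, _ in TABLE_1)
female_share = share(sex_counts["Female"], total)

# Figure 2: cases in which Article 21 forms part of the rationale.
article_21_cases = [name for name, _, rationale in TABLE_1 if "Article 21" in rationale]
article_21_share = share(len(article_21_cases), total)

# Assumption from the text: every petitioner except Kamya Buch is a celebrity.
celebrity_count = sum(1 for name, _, _ in TABLE_1 if name != "Kamya Buch")
celebrity_share = share(celebrity_count, total)

print(f"Female petitioners: {female_share}%")
print(f"Article 21 relied upon: {article_21_share}%")
print(f"Celebrity petitioners: {celebrity_share}%")
```

Counting Anil Kapoor under Article 21 despite its mixed rationale mirrors how the two Article 21 cases are identified in this section; a stricter count of cases resting on Article 21 alone would leave only Shilpa Shetty.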

Therefore, the judicial reasoning behind the removal of deepfakes in India is heavily androcentric and mercenary. While that is understandable since all eight of those petitioners argued that the deepfakes caused commercial or political harm to their image, the precedential value of the dominant commercial rationales for removal prejudices non-celebrity women who cannot prove commercial loss easily. This argument is supported by the fact that in Kamya, where the petitioner was the only non-celebrity among all nine cases, the court could focus on the non-commercial ramifications of unauthorized sexually explicit deepfakes and did not ground its rationale on personality and publicity rights despite ruling in her favour. Interestingly, this is the only judgement which concluded that the deepfakes should be taken down on account of being ‘defamatory.’ This rationale differs completely from the Courts’ position of taking down deepfakes on account of causing ‘disrepute’ to the celebrity, as the former is construed without a commercial lens. I argue that defamation is perhaps a more gender-neutral and easily pursuable ground for non-celebrity women to file for the removal of their deepfakes, and I urge courts to consider the rationale in Kamya over that in other cases.

Horizontal Implementation of Article 21 as the Way Forward

In February 2026, UNICEF issued a statement regarding AI-generated deepfakes stating that ‘deepfake abuse is abuse.’ Echoing this sentiment, I suggest that the courts in India recognize the inherently violative nature of non-consensual deepfakes even when no case for commercial exploitation can be made. In Subhranshu Rout @ Gugul v. State Of Odisha, the Odisha High Court, upholding the Right to be Forgotten in a case of revenge pornography, observed that if such a right is not recognized, the modesty of a woman can be outraged surreptitiously, leading to a perpetual misuse of her images in the electronic form. Following this approach, any violation of privacy should be a sufficient ground to deem the services of ‘nudifying websites’ unlawful and to grant relief to the aggrieved parties even if disrepute to their image cannot be proven. Given that the Supreme Court in Kaushal Kishor v. State of Uttar Pradesh has already made the horizontal applicability of Article 21 a reality, this route can protect every citizen from the gross violation of their right to privacy and dignity.

As for the liability of nudifying websites, I suggest that courts follow the approach taken by the Delhi High Court in T.V. Today Network Limited v. Google LLC & Ors., whereby in a matter involving deepfaked impersonations of a journalist, the Court held that the company that is responsible for the publication of fabricated content without its knowledge should be made liable. Since the content in deepfakes is fabricated, the websites that wilfully allow and promote such fabrications should be held liable. If viewed as intermediaries under Section 2(1)(w) of the IT Act, nudifying websites will inherently fail to comply with the due diligence requirements under Rule 3(1) of the IT Rules, 2025 since it requires them to prominently publish their privacy policy along with taking reasonable measures to stop the sharing of pornographic media that is invasive of bodily privacy under Rule 3(1)(b)(ii).

Finally, I advocate for a preventive approach alongside a curative one under Article 21. For this, the unauthorized publication of sexualized deepfakes should consistently be recognized by Courts as being violative of the right to privacy alongside other grounds if relevant. To curtail the menace of nudifying websites at the macro-level, a four-pronged approach should be adopted. Firstly, the decision in Sadhguru should be relied upon to give express recognition to the problem of nudifying websites. Issuing directions to order all search engines to disallow the continuation of these websites would be the next step. Secondly, Article 21 should be applied horizontally in all pleas of taking down sexually explicit deepfakes. This would protect all individuals, regardless of their celebrity status, harm to commercial interests, or sex. Thirdly, the users of nudifying websites should be penalized under Section 67A of the IT Act to further discourage deepfakes from being published. Lastly, the creators of nudifying websites should be penalized under the IT Rules, 2025 for violating their duties as intermediaries. These steps would collectively ensure that no individual suffers from the ramifications of the unauthorised publication of their sexual deepfakes.

Conclusion

Law enforcement agencies should undoubtedly ensure the implementation of Section 67A of the IT Act and take action against intermediaries which violate the compliance requirements under the IT Rules, 2025. That said, to ensure that the fundamental right to live with privacy and dignity under Article 21 can be fully realised, it is the judiciary that must ensure that all individuals are guaranteed these rights in the cyberspace, especially women, since deepfakes are a form of gendered abuse. The first step in this regard is to ensure that deepfakes are not viewed only through a commercial lens, but rather from the viewpoint of privacy as a fundamental right. This way, non-celebrity men and women will be able to avail the same remedies as male celebrities for the removal of their deepfakes. Therefore, the Courts must begin by acknowledging the problem explicitly, and consider the aspect of inclusivity in their rationale to protect petitioners filing takedown suits. To solve the challenge of deepfakes, that acknowledgement must be accompanied by the horizontal enforcement of Article 21, without requiring the aggrieved party to prove commercial harm to their image.

Ritwik Sharma is a fourth-year student at Rajiv Gandhi National University of Law, Punjab with a keen interest in Constitutional law, Technology law, and intersectional studies. Beyond academics, Ritwik is interested in Psychology, Literature and Mythology.

Categories: Legislation and Government Policy